- Amal Dorai

- Aug 19, 2022

Updated: Aug 28, 2022

Highlights from Anorak Ventures' event with newkid at LA Tech Week 2022.

As a seed-stage venture capital firm investing in emerging technology, we meet incredibly smart technical founders who have created astounding technologies and products. However, we regularly see these founders struggle to connect with an audience and get that audience from "spectator" to "customer." We've worked with many of our portfolio companies on marketing and messaging to help these great products become great companies, and we partnered with branding agency newkid to capture some of these lessons at our talk "PERCEPTION IS REALITY" at this year's LA Tech Week. We've summarized that talk below.

1. PEOPLE AREN'T NUMBERS. Startups often define their target customers in terms of broad demographic slices, like "fitness-oriented women" or "services businesses under $10 million in revenue." These are a good start to help narrow your focus, but you can't build a company around this kind of audience without a deeper understanding of who they are.

This is the first, and most important, step towards developing your company's positioning. You need to understand your customers more broadly than how they would interact with your product. You need to meet them, talk to them, and understand them without trying to sell them anything.

If your company becomes very successful, your company, and you personally, will have a leadership role in your industry, the way that Tim Cook at Apple and Satya Nadella at Microsoft are important figures in the global technology industry. Just as you wouldn't run for President of a country without understanding its people, its history, its music, its movies, its stories, its food, and its culture, make sure you understand every aspect of your customer, not just those related to your business.

Homework: Spend a day doing customer service. Join a subreddit. Jump into a mosh pit.

2. NO IS THE KEY WORD. As a startup, it's hard to say no, especially to a customer who wants a specific feature and has an open checkbook and is ready to pay for it. A customer might truthfully say, "I'd buy this if it had SAP integration!" And they might pay you enough to cover the costs of building it, and then some. But a startup's biggest advantage is focus, and that SAP integration delays the rest of your vision, creates openings for competition to enter, and makes your product more complex, and thus worse, for the rest of your customers who don't need it.

That's not to say that you shouldn't listen to your customers, but your customer feedback shouldn't go directly into your backlog. Your deep insight into your customer (see point 1) is what gives you the confidence to set the vision for your company yourself, and stay true to it. After all, if you're just building what your customer tells you to, you're building someone else's company for them.

Homework: Delete 5 user requests. Move them from Priority 2 to "Priority Never."

3. CLARITY IS KING. Good startup founders know their markets better than anyone else in the world, and hire other people who are also deep domain experts. But when they communicate to their market, they can fall into the trap of being too abstract or too vague in their messaging.

Vague marketing phrases like "Reimagine your X stack" assume that someone is thinking about X, understands what an "X stack" is, and even wants to "reimagine" it -- it's likely that none of these are true!

A startup needs a message so clear that anyone in your target audience immediately understands what your company does and why they would want your product.

This is an area where startups have a strong advantage over large companies. Large companies sell many different products to many different types of customers, so their marketing is necessarily vague. But when you look at the most successful companies when they were smaller, you can find the focus and clarity it takes to succeed as a startup. For example, Twilio's home page in 2010 describes exactly what it sells: a "web-service API" to "voice-enable your apps" or provide "text messaging for your apps."

Twelve years later, in 2022, Twilio is a public company with roughly $3 billion in annual revenue, selling hundreds of different products. Their home page is necessarily vague, referring to "data-driven customer engagement at scale."

Don't look to modern megacorporations for clues about how to market your startup -- look at how they marketed themselves when they were your size. Find a way to describe your company that is so clear that your most clueless investor (hopefully that's not us!😄) could sell your product for you.

Homework: Ask your best friend to describe your startup in one sentence.

4. ATTENTION IS EARNED. People will read a 10,000-word magazine article about Apple or Facebook because they already know those companies and want to learn more about them. But as a startup, you're just one of a million things that people could be paying attention to. Why should they pay attention to you? You need a first sentence that earns the reader's attention and gets them to read the second sentence.

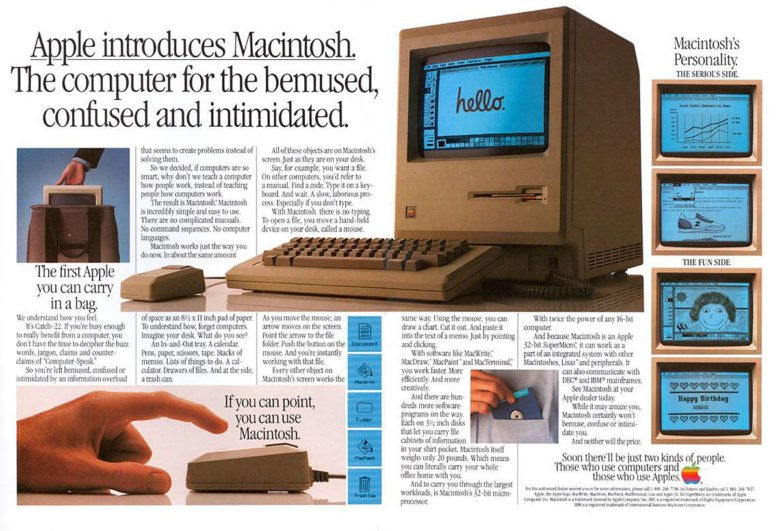

A great example of this is Apple's original advertising for the Macintosh 38 years ago, when many people found computers perplexing and intimidating:

There's a LOT of information in this advertisement, but the opening tagline gives the target audience a reason to read further.

Homework: Write a 1-2-4 pitch for your company. One sentence to make your audience care. Two more sentences to explain what you do. Four more sentences to support your claims.

5. EDUCATION IS EXPENSIVE. You have 30 seconds to talk to a customer who's been alive and learning things for 30 years -- instead of teaching them everything about your product from scratch, it's far more effective to tap into knowledge that they already have.

The "Uber for X" marketing approach is definitely a bit tired, but it existed for a reason -- instead of having to explain the concept of an on-demand app, the "Uber for X" formulation more concisely tells your customer what they need to know.

Homework: Formulate your company in terms of one or two concepts that your customer already knows.

6. WHAT GETS REPEATED GETS REMEMBERED. A customer won't remember something after seeing it once. The way to go from a brand impression to brand recognition is consistency and repetition.

As a startup founder, you'll be giving your pitch thousands of times, across multiple channels like video, Web, and in-person. Saying the same thing thousands of times can get exhausting, but resist the temptation to "change it up" or "keep it interesting." That doesn't mean giving a canned response in every situation, but find your handful of key phrases and visuals, and use them everywhere.

Your target customer is hearing your pitch for the first time, so make sure they're getting your best material.

Homework: Pull up your website, app, social media, emails, pitch deck, etc. Do they all look and sound like they came from the same brand?

7. YOU'RE NOT ALWAYS THE EXPERT. Founders are accustomed to "doing it themselves," but an important part of being an effective founder is knowing when you need a domain expert. You wouldn't try to build your company's iOS app if you've never written a line of code, but we see founders trying to build their startup's pitch deck with built-in PowerPoint themes.

If you had to make a single three-point basketball shot and your life depended on it, would you take the shot yourself, or hire Steph Curry to take it for you? Of course you'd hire Steph Curry, and he doesn't work for free.

As in any market, you don't always get what you pay for just by paying for it. Find a trusted referral to a branding and marketing agency that has done work that you respect. Set clear guidelines on expectations, and if you're unsure about fit, start with small deliverables before moving into bigger projects.

Homework: Ask for referrals to three branding or marketing agencies from founders whose branding and marketing you hold in high regard.

8. PERCEPTION IS REALITY. Your customer often has to invest a huge amount of trust in you by providing their personal information, medical records, banking passwords, or even access to their home, and they have very little information to go on. Is your server's root password "password"? They can't assess your trustworthiness directly, so they index very strongly on how you present yourself.

What to you is a spelling mistake is a red flag to a customer. Poor attention to detail is the quickest way to lose hard-earned customer trust.

Homework: Check every word, every pixel, every button, every interaction... and do it again next week.

In summary: INSIGHT, FOCUS, CLARITY, PERSUASION, FAMILIARITY, CONSISTENCY, EXPERTISE, TRUST.

Learn more about Anorak Ventures at https://anorak.vc or newkid at https://newkid.services.